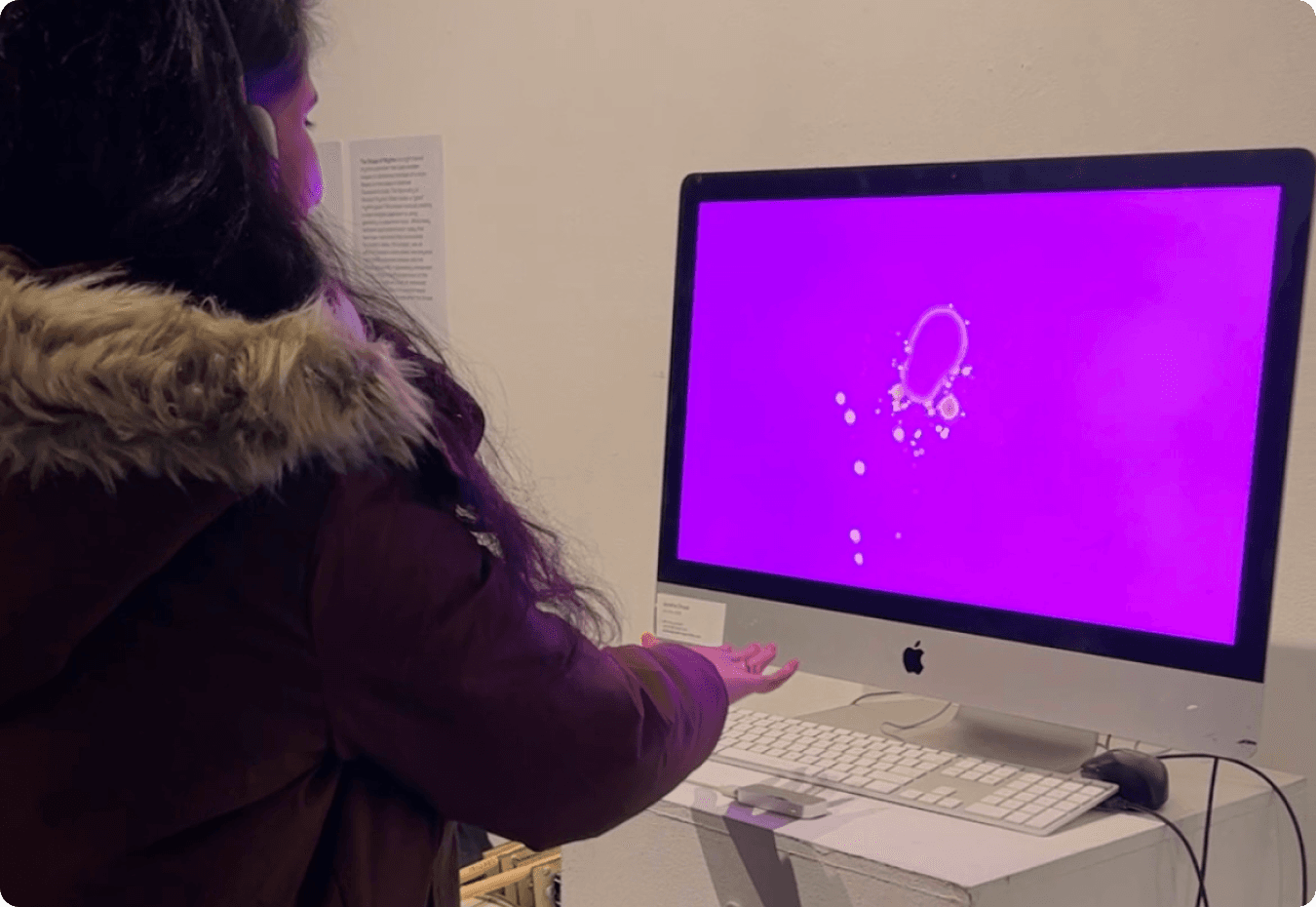

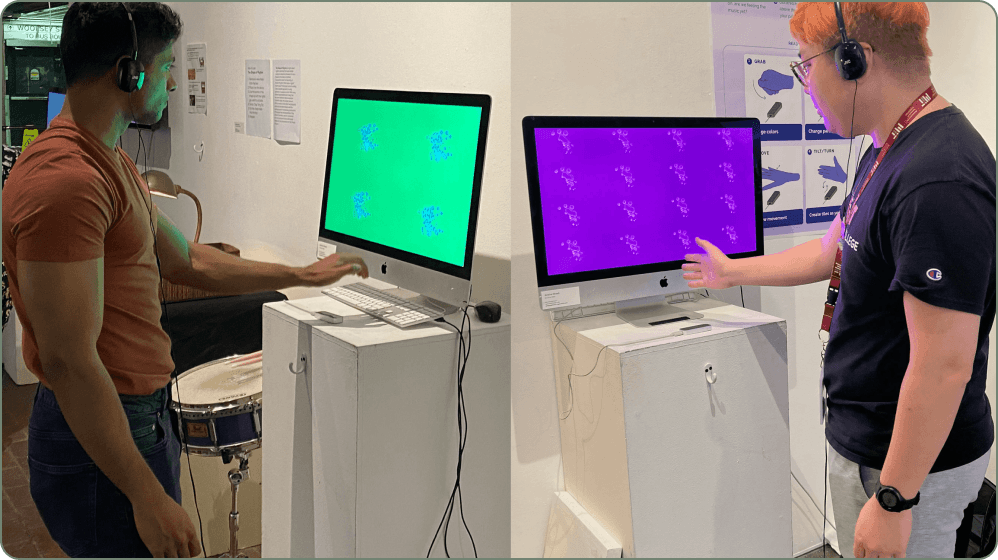

An interactive audiovisual installation in which visitors used hand gestures to manipulate visuals in time with the music. It was installed at the CyberArts Gallery in Boston for the Fresh Media show.

Year

2022

Role

Interaction Designer / Creative Technologist

Industry

Immersive media

Team

Individual Contributor

Timeline

3 months

Project Context

During a snacking session interrupted by an unavoidable YouTube ad, I pondered how inconvenient it is to interact with technology with messy hands. This sparked my exploration of Natural User Interfaces (NUIs) and Graphical User Interfaces (GUIs), in search of seamless interaction without physical contact.

But why stop at functionality? I delved into gestural interfaces, envisioning a world where drawing and VJ-ing were possible with mere gestures, aiming to infuse surprise and delight into user experiences.

A glimpse at the process: bringing a speculative idea to life in a prototype

Research and DJ Insights

PHASE 1

Crafting Audio Visuals

PHASE 2

Mapping Gestures

PHASE 3

Gallery Installation/Testing

PHASE 4

Speculating the Future

PHASE 5

I started experimenting with (fun) gesture-based interfaces

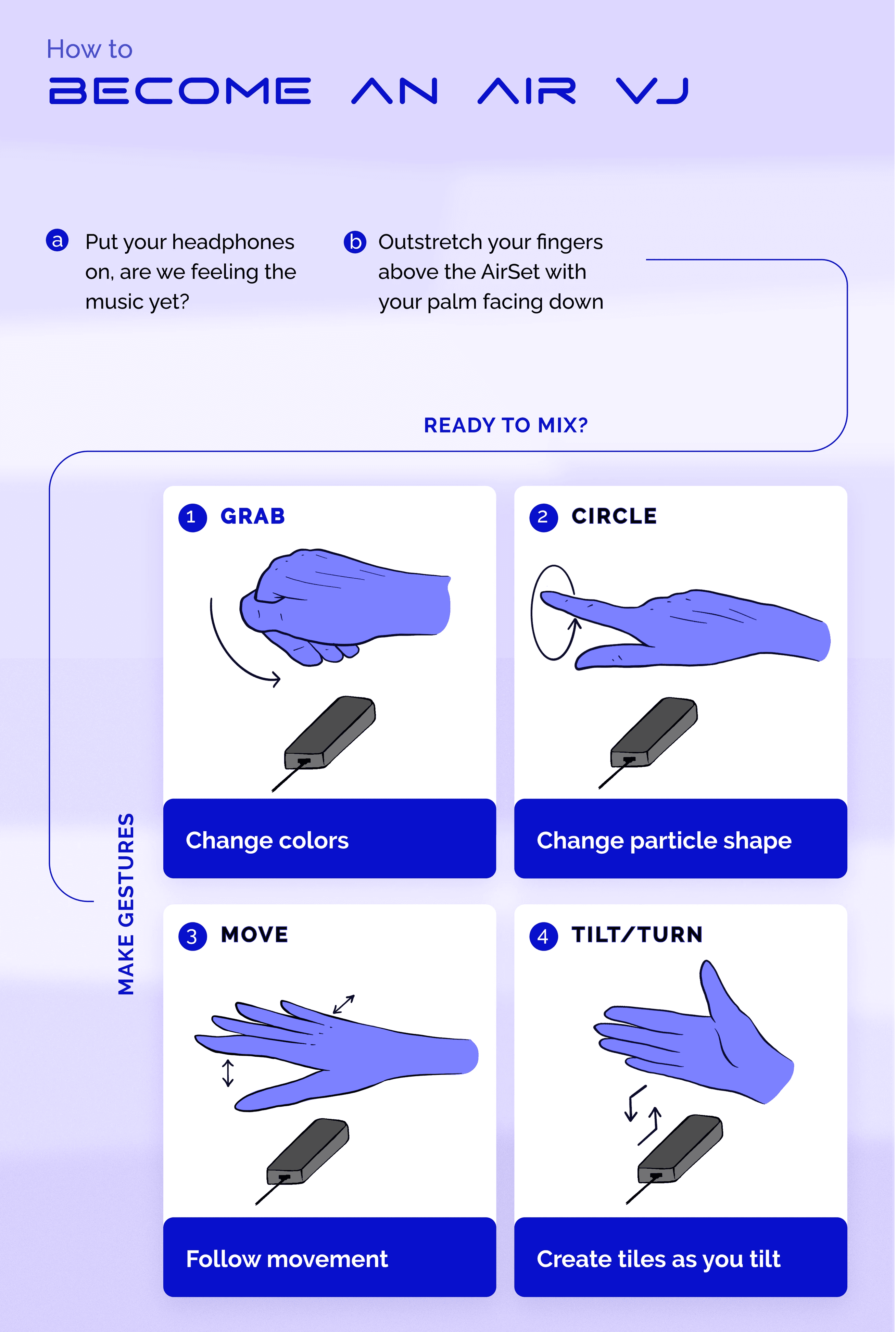

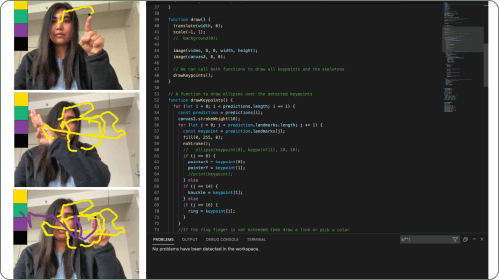

Using the handpose library from ml5.js, I developed an interactive system where users could manipulate particles and generate music simply using gestures.
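To give a feel for the logic behind a gesture like a pinch, here is a minimal sketch, not the code from the prototype. It assumes the hand tracker returns 21 landmarks per hand as `(x, y, z)` points, with the wrist at index 0, the thumb tip at index 4, and the index fingertip at index 8, which is the layout handpose-style models use. The function names and the `0.7` threshold are hypothetical, and the sketch is written in Python for readability rather than in the JavaScript the ml5.js prototype would run in.

```python
import math

# Landmark indices in the 21-point hand layout (assumed, see lead-in).
WRIST = 0
THUMB_TIP = 4
INDEX_TIP = 8
MIDDLE_KNUCKLE = 9  # base of the middle finger


def pinch_strength(landmarks):
    """Return a value from 0.0 (fingers apart) to 1.0 (fingertips touching)."""
    # Palm length is used as a ruler so the result does not change
    # when the hand moves closer to or further from the camera.
    palm = math.dist(landmarks[WRIST], landmarks[MIDDLE_KNUCKLE])
    if palm == 0:
        return 0.0
    gap = math.dist(landmarks[THUMB_TIP], landmarks[INDEX_TIP]) / palm
    return max(0.0, min(1.0, 1.0 - gap))


def is_pinching(landmarks, threshold=0.7):
    """Hypothetical threshold: tune it by watching real hands."""
    return pinch_strength(landmarks) >= threshold
```

A continuous strength value is handy because it can drive something expressive, like particle size or volume, while the thresholded version works as an on/off trigger.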

Technology and Design Execution

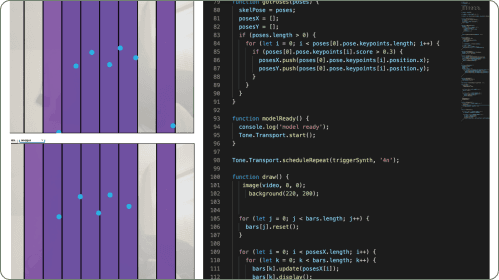

I used a Leap Motion Controller to detect gestures and pass them to TouchDesigner (TD), where they controlled the audiovisuals being generated. Music played a huge part in this installation, and the genre of choice was techno!
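The mapping from a tracked hand to a visual parameter can be sketched in a few lines. This is an illustration of the general idea rather than the actual TouchDesigner network: it assumes the sensor reports a palm height in millimetres, rescales it to a 0 to 1 control value, and smooths it so the visuals do not jitter. The `normalize` and `Smoother` names, the 100 to 400 mm range, and the smoothing factor are all hypothetical.

```python
def normalize(value, low, high):
    """Rescale value from the range [low, high] to [0, 1], clamped at both ends."""
    if high == low:
        raise ValueError("low and high must differ")
    t = (value - low) / (high - low)
    return max(0.0, min(1.0, t))


class Smoother:
    """Exponential smoothing: each new sample nudges the output toward it."""

    def __init__(self, alpha=0.2):
        self.alpha = alpha  # 0 = frozen, 1 = no smoothing
        self.value = None

    def update(self, sample):
        if self.value is None:
            self.value = sample  # first sample passes straight through
        else:
            self.value += self.alpha * (sample - self.value)
        return self.value


# Example: palm height (assumed to be in mm) drives a brightness-style control.
smoother = Smoother(alpha=0.2)
for palm_height_mm in (120.0, 250.0, 260.0, 390.0):
    control = smoother.update(normalize(palm_height_mm, 100.0, 400.0))
```

A lower `alpha` gives calmer visuals at the cost of a little lag, which is a trade-off worth tuning by ear against the tempo of the music.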

What does the future hold?

Enhanced Video Calls

APPLICATION 1

Users can effortlessly manage video calls through natural hand gestures like waving, pointing, and pinching, controlling settings such as muting, camera angle, and screen sharing.

Safe Vehicle Controls

APPLICATION 2

Drivers can control functions such as changing music or navigating menus without diverting their attention from the road, reducing distractions and promoting road safety.

Industrial Machinery Control

APPLICATION 3

Workers can operate machinery using hand gestures, eliminating the need for physical contact that could lead to burns from hot surfaces or other injuries.

Medical Equipment Operation

APPLICATION 4

Healthcare professionals can use hand gestures to adjust settings on various devices. This limits physical interaction with equipment, reducing contamination and improving hygiene in medical settings.

Interactive Presentations

APPLICATION 5

Speakers and presenters can navigate through slides, zoom in on content, and engage with the audience using natural hand movements, creating dynamic and engaging presentations.

Gaming and Entertainment

APPLICATION 6

Gamers can immerse themselves in interactive experiences, controlling characters or objects in virtual worlds with natural hand movements. This makes gameplay more enjoyable and provides a deeper level of engagement.

What's the next improvement?

This technology holds immense potential for creative expression and innovation across various sectors, promising a future where interactions with technology are more natural and intuitive.